Data Integrity Scan – Tarkifle Weniocalsi, Can Qikatalahez Lift, Farolapusaz, Bessatafa Futsumizwam, Qunwahwad Fadheelaz

This article examines a Data Integrity Scan designed for complex pipelines and the roles that Tarkifle Weniocalsi and Can Qikatalahez Lift play alongside Farolapusaz, Bessatafa Futsumizwam, and Qunwahwad Fadheelaz. The approach relies on deterministic checks, auditable records, and modular validation to preserve data accuracy and trust, and it pairs them with actionable remediation paths and continuous monitoring. Once governance is clear and responsibilities are assigned, stakeholders gain a framework for isolating anomalies quickly, although critical questions remain about practical deployment and how to measure outcomes.

What Data Integrity Scans Do for Complex Pipelines

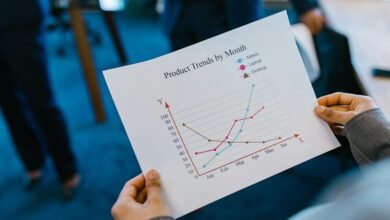

Data integrity scans serve as a proactive guardrail in complex pipelines by continuously verifying that data remains accurate, consistent, and complete as it flows through multiple systems.
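As a minimal sketch of what "accurate, consistent, and complete" can mean at a single hand-off between systems, the Python below compares a batch as it leaves one stage with the same batch as it arrives at the next. The function names `record_digest` and `compare_stages`, and the assumption that every record carries a unique `id` field, are hypothetical illustrations rather than part of any named tool described here.

```python
import hashlib
import json
from typing import Any, Dict, List

Record = Dict[str, Any]


def record_digest(record: Record) -> str:
    """Deterministic SHA-256 digest of one record; keys are sorted so field order does not matter."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def compare_stages(upstream: List[Record], downstream: List[Record]) -> Dict[str, List[Any]]:
    """Hypothetical stage-to-stage scan. Assumes every record carries a unique "id" field."""
    up = {r["id"]: record_digest(r) for r in upstream}
    down = {r["id"]: record_digest(r) for r in downstream}
    return {
        # Completeness: records that left the upstream stage but never arrived.
        "missing": sorted(up.keys() - down.keys()),
        # Consistency: records that appeared downstream with no upstream source.
        "unexpected": sorted(down.keys() - up.keys()),
        # Accuracy: records present in both stages whose contents changed in transit.
        "altered": sorted(k for k in up.keys() & down.keys() if up[k] != down[k]),
    }


if __name__ == "__main__":
    src = [{"id": 1, "amount": 10}, {"id": 2, "amount": 20}, {"id": 3, "amount": 30}]
    dst = [{"id": 1, "amount": 10}, {"id": 2, "amount": 25}, {"id": 4, "amount": 40}]
    print(compare_stages(src, dst))  # {'missing': [3], 'unexpected': [4], 'altered': [2]}
```

Hashing a canonical JSON form keeps the comparison deterministic: the same two batches always yield the same report, regardless of dictionary key order.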

The scan focuses on detecting anomalies, maintaining an audit trail, and preserving trust in the data, and each of these goals can be tracked with concrete metrics.

It also makes governance transparent, points to clear next steps, and assigns responsibilities, while leaving teams free to adapt, improve, and evolve their data workflows.

Core Components: Tarkifle Weniocalsi and Can Qikatalahez Lift

Tarkifle Weniocalsi and Can Qikatalahez Lift are the core components behind data integrity scans in complex pipelines: they provide structured validation, traceability, and actionable remediation paths. The architecture favors modularity, deterministic checks, and auditable records, which supports proactive risk mitigation. Because the checks are aligned with governance policy, the components protect data integrity without constraining day-to-day operations, while keeping the analysis rigorous and accountable.
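The source does not describe the internals of Tarkifle Weniocalsi or Can Qikatalahez Lift, so the following is only a generic sketch of the "modular, deterministic checks with auditable records" idea. Each check is an independent pure function registered by name, and results come back in a fixed order so two runs over the same data can be diffed or archived. All names (`CheckResult`, `not_null`, `in_range`, `run_checks`) are hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Tuple

Record = Dict[str, Any]
# Hypothetical check signature: a pure function of the rows, returning (passed, detail).
CheckFn = Callable[[List[Record]], Tuple[bool, str]]


@dataclass(frozen=True)
class CheckResult:
    name: str
    passed: bool
    detail: str


def not_null(field: str) -> CheckFn:
    """Build a check that fails if any row lacks a value for `field`."""
    def check(rows: List[Record]) -> Tuple[bool, str]:
        bad = [i for i, r in enumerate(rows) if r.get(field) is None]
        return (not bad, f"{len(bad)} null value(s) in '{field}'")
    return check


def in_range(field: str, lo: float, hi: float) -> CheckFn:
    """Build a check that fails if any non-null value of `field` lies outside [lo, hi]."""
    def check(rows: List[Record]) -> Tuple[bool, str]:
        bad = [i for i, r in enumerate(rows)
               if r.get(field) is not None and not lo <= r[field] <= hi]
        return (not bad, f"{len(bad)} value(s) of '{field}' outside [{lo}, {hi}]")
    return check


def run_checks(rows: List[Record], checks: Dict[str, CheckFn]) -> List[CheckResult]:
    """Run every registered check in name order so the output is reproducible."""
    return [CheckResult(name, *checks[name](rows)) for name in sorted(checks)]


if __name__ == "__main__":
    rows = [{"id": 1, "amount": 10.0}, {"id": 2, "amount": None}, {"id": 3, "amount": 500.0}]
    checks = {"amount_not_null": not_null("amount"), "amount_in_range": in_range("amount", 0, 100)}
    for result in run_checks(rows, checks):
        print(result)
```

Keeping each check small and side-effect free is what makes the scan modular: adding a rule means registering one more function, and no existing check has to change.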

Detecting Anomalies, Ensuring Auditability, and Maintaining Trust

Proactive monitoring that is aligned with compliance requirements makes it possible to isolate anomalies quickly and to keep immutable records and verifiable audit trails. This disciplined framework clarifies who is responsible for what, reduces risk, and keeps the data ecosystem credible and auditable, which in turn gives teams the freedom to act with confidence.
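One common way to make an audit trail "immutable" and "verifiable" is a hash chain, where every entry records the hash of the entry before it. The sketch below assumes that technique; the class name `AuditTrail` and the entry layout are hypothetical, and events must be JSON-serializable dictionaries.

```python
import copy
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditTrail:
    """Hypothetical append-only log in which each entry stores the SHA-256 of the previous entry.

    Altering, reordering, or deleting an earlier entry breaks the chain, so verify() detects it.
    This sketch does not stop someone from rewriting the whole chain; anchoring the latest
    hash in a separate system would be needed for that.
    """

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    @staticmethod
    def _hash(body: Dict[str, Any]) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def append(self, event: Dict[str, Any]) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        # Deep-copy the event so later changes to the caller's dict cannot alter the log.
        body = {"seq": len(self.entries), "event": copy.deepcopy(event), "prev": prev}
        entry = dict(body, hash=self._hash(body))
        self.entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        prev = GENESIS
        for seq, entry in enumerate(self.entries):
            body = {"seq": entry["seq"], "event": entry["event"], "prev": entry["prev"]}
            if entry["seq"] != seq or entry["prev"] != prev or entry["hash"] != self._hash(body):
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    trail = AuditTrail()
    trail.append({"check": "amount_not_null", "passed": True})
    trail.append({"check": "amount_in_range", "passed": False})
    print(trail.verify())  # True
    trail.entries[0]["event"]["passed"] = False  # simulate tampering with history
    print(trail.verify())  # False
```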

Practical Implementation: Steps, Metrics, and Next Best Actions

A practical implementation proceeds through a structured sequence of steps, each tied to measurable metrics and concrete next actions that strengthen data integrity.

The approach emphasizes data lineage, anomaly detection, and audit trails, with data quality preserved throughout.

It puts clear governance, continuous monitoring, and incident response first. Together these reduce risk proactively and leave teams free to innovate within a robust, auditable, and transparent data ecosystem.
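The text does not specify an anomaly detection method, so as one possible approach the sketch below swaps in the modified z-score, a robust outlier test based on the median absolute deviation (MAD), and applies it to a hypothetical pipeline metric such as daily row counts. The 3.5 threshold is a conventional cutoff for this score, not a value taken from the source, and the function names are illustrative.

```python
from statistics import median
from typing import Sequence


def modified_z_score(history: Sequence[float], value: float) -> float:
    """Modified z-score of `value` relative to `history`, based on the median absolute deviation.

    The 0.6745 factor rescales MAD so the score is comparable to a standard z-score for normal data.
    """
    med = median(history)
    mad = median([abs(x - med) for x in history])
    if mad == 0:
        # Every historical value equals the median, so any deviation at all is treated as extreme.
        return 0.0 if value == med else float("inf")
    return 0.6745 * (value - med) / mad


def is_anomalous(history: Sequence[float], value: float, threshold: float = 3.5) -> bool:
    """Flag `value` when its modified z-score exceeds the (assumed) conventional 3.5 cutoff."""
    return abs(modified_z_score(history, value)) > threshold


if __name__ == "__main__":
    # Hypothetical daily row counts for one pipeline table.
    row_counts = [1000, 1020, 990, 1010, 1005, 995, 1015]
    print(is_anomalous(row_counts, 1008))  # False
    print(is_anomalous(row_counts, 400))   # True
```

Using the median and MAD instead of the mean and standard deviation keeps a single past outlier from inflating the baseline, which matters when the history itself may contain earlier incidents.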

Conclusion

Data integrity scans strengthen complex pipelines through deterministic checks, traceable records, and modular validation. They allow anomalies to be isolated quickly, preserve auditability, and keep the data ecosystem trustworthy while still allowing workflows to evolve. Together, Tarkifle Weniocalsi and Can Qikatalahez Lift provide a structured framework for governance and continuous improvement, with which practitioners can quantify risk, monitor signals, and prescribe remediation. Is ongoing vigilance enough to guarantee resilience, or must the governance model keep adapting as data landscapes evolve?